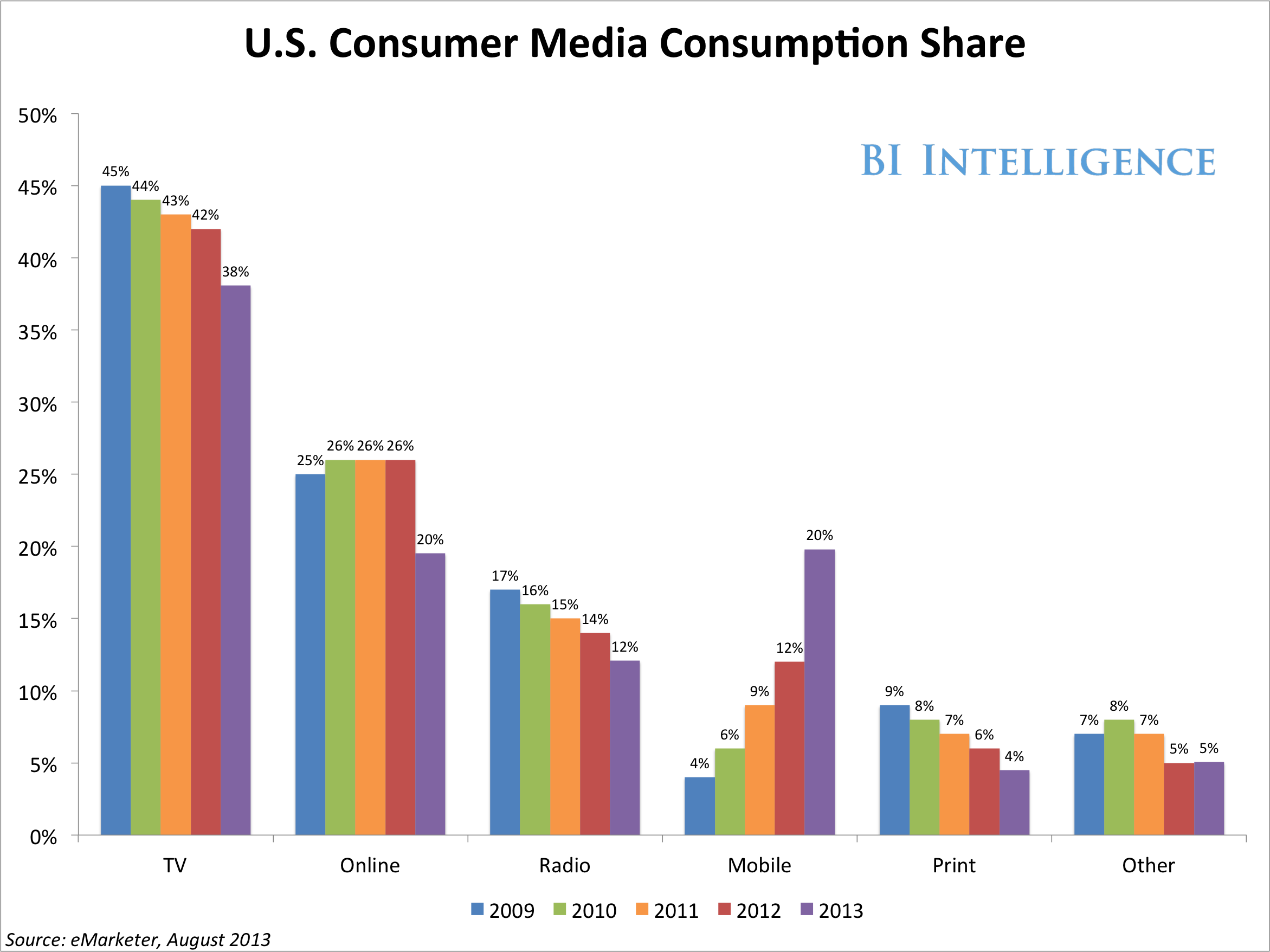

Media consumption is at an all-time high, spurred by continued growth in consumption on mobile devices.

U.S. Consumer Media Consumption Share. Source: eMarketer, August 2013

As a result, advertisers and publishers are looking for new ways to connect with consumers as the media landscape continues to change.

Alongside the traditional “first screen” (the TV), use of a “second screen” (the mobile device) continues to grow as we become increasingly attached to our smartphones. Multitasking is also becoming more prevalent, as indicated by rising dual-screen consumption metrics. According to a 2012 Google research study of smartphone, PC, and TV users, 81% of participants reported using a mobile device while watching television.

Today, most ads on the second screen rely on traditional targeting methods that serve ads to the user based on the context (relevance to the media being consumed on the mobile device) or the audience (attributes related to the interests or behaviors of the consumer using the mobile device).

But to cut through the clutter and increase engagement in a multi-screen world, marketers are creating new ways to connect with consumers whose attention is increasingly split across multiple media.

At CES 2014, video ad company Innovid announced a partnership with Cisco to create a framework for a new method of targeting ads to consumers on second-screen mobile devices.

The new platform will take a unique approach – targeting ads to users on the second screen based on keywords being mentioned on the first screen. By tapping into microphones on mobile devices, the platform can listen to the TV broadcast (first screen) and serve up mobile ads (second screen) related to the keywords mentioned in the broadcast.

So how will this work? As a consumer watches a broadcast, keywords are identified through the mobile device’s microphone, and ads can be served against those keywords, much as the Google Display Network targets ads based on contextual keywords on a webpage. In this case, the words are spoken in the broadcast rather than appearing as on-page text.

For example, if a movie star is on a late-night talk show discussing a new film, the user could see an ad on their tablet offering tickets for the film.
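To make the idea concrete, here is a minimal Python sketch of keyword-based matching. It is not Innovid's or Cisco's implementation, whose technical details have not been published. It assumes the broadcast audio has already been converted to a text transcript by some speech-to-text step that is not shown, and the `Ad` class, `match_ads` function, and sample inventory are hypothetical names invented for illustration.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Ad:
    """A hypothetical second-screen ad and the keywords it targets."""
    name: str
    keywords: frozenset


def tokenize(transcript: str) -> set:
    """Lowercase the transcript and split it into a set of words."""
    return set(re.findall(r"[a-z0-9']+", transcript.lower()))


def match_ads(transcript: str, inventory: list) -> list:
    """Return ads whose keywords all appear in the transcript,
    listing ads with more keywords (more specific targeting) first."""
    words = tokenize(transcript)
    hits = [ad for ad in inventory if ad.keywords <= words]
    return sorted(hits, key=lambda ad: len(ad.keywords), reverse=True)


# Hypothetical ad inventory and transcript snippet (assumed, not real data).
inventory = [
    Ad("Movie tickets", frozenset({"film", "premiere"})),
    Ad("Pizza delivery", frozenset({"pizza"})),
]
transcript = "So tell us about the new film. The premiere is this Friday, right?"

matched = match_ads(transcript, inventory)
print([ad.name for ad in matched])
```

In this toy version an ad qualifies only when every one of its keywords is heard. A real system would presumably need fuzzier matching, and, as the announcement suggests, some notion of who is speaking and in what context.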

The new platform will tap into Cisco’s Contexta system, which listens to broadcast content and generates metadata about the spoken content in order to serve relevant ads on the second screen. According to the announcement at CES this year, targeting will not be limited to the spoken keywords alone; the context in which the keywords are used will also be a factor. Relevance should be high, as the system doesn’t just look at keywords in a vacuum but analyzes who is saying them and in what context.

The initial rollout of the platform will likely target users who have installed mobile applications from content providers like cable companies. To achieve scale, ads can be served through mobile notifications, so users do not need to have the cable company’s mobile application open in order to be served an ad.

According to Innovid, the initial rollout of the platform will take place by Q3 2014. Technical details haven’t been revealed, but there are likely some hurdles around application permissions, as mobile users would need to allow their device to listen to content in the background for the platform to work. If scale can in fact be achieved, perhaps by incentivizing consumers to opt into the program, this could be an interesting way to cut through the clutter and drive personalized engagement in an increasingly fragmented and competitive media landscape.